Getting started with DoWhy: A simple example

This is a quick introduction to the DoWhy causal inference library. We will load a sample dataset and estimate the causal effect of a (pre-specified) treatment variable on a (pre-specified) outcome variable.

First, let us add the required path for Python to find the DoWhy code and load all required packages.

[1]:

import os, sys

sys.path.append(os.path.abspath("../../../"))

Let’s check the Python version.

[2]:

print(sys.version)

3.6.9 (default, Nov 7 2019, 10:44:02)

[GCC 8.3.0]

[3]:

import numpy as np

import pandas as pd

import dowhy

from dowhy import CausalModel

import dowhy.datasets

Now, let us load a dataset. For simplicity, we simulate a dataset with linear relationships between common causes and treatment, and common causes and outcome.

The beta parameter sets the true causal effect (here, 10).

[4]:

data = dowhy.datasets.linear_dataset(beta=10,
        num_common_causes=5,
        num_instruments=2,
        num_effect_modifiers=1,
        num_samples=10000,
        treatment_is_binary=True)
df = data["df"]
print(df.head())
print(data["dot_graph"])
print("\n")
print(data["gml_graph"])

          X0   Z0        Z1         W0         W1         W2         W3         W4  \
0   2.583198  1.0  0.183011  -2.490799  -0.270172  -0.013538   0.979027  -0.033464
1   0.712234  1.0  0.150498  -0.892550   0.878099   0.137869   0.872547   1.138340
2   0.431597  0.0  0.680770  -1.194290  -0.743686   0.409159  -0.410003   0.378746
3   0.715099  1.0  0.129770  -0.260848  -0.779302   1.038961   1.078510  -0.430214
4  -0.285593  1.0  0.480503   0.933601  -0.287256   0.524709   0.602599  -0.139902

     v0          y
0  True   2.320453
1  True  13.069987
2  True   2.154850
3  True  14.552760
4  True  17.646749

digraph { U[label="Unobserved Confounders"]; U->y;v0->y; U->v0;W0-> v0; W1-> v0; W2-> v0; W3-> v0; W4-> v0;Z0-> v0; Z1-> v0;W0-> y; W1-> y; W2-> y; W3-> y; W4-> y;X0-> y;}

graph[directed 1node[ id "y" label "y"]node[ id "Unobserved Confounders" label "Unobserved Confounders"]edge[source "Unobserved Confounders" target "y"]node[ id "W0" label "W0"] node[ id "W1" label "W1"] node[ id "W2" label "W2"] node[ id "W3" label "W3"] node[ id "W4" label "W4"]node[ id "Z0" label "Z0"] node[ id "Z1" label "Z1"]node[ id "v0" label "v0"]edge[source "v0" target "y"]edge[source "Unobserved Confounders" target "v0"]edge[ source "W0" target "v0"] edge[ source "W1" target "v0"] edge[ source "W2" target "v0"] edge[ source "W3" target "v0"] edge[ source "W4" target "v0"]edge[ source "Z0" target "v0"] edge[ source "Z1" target "v0"]edge[ source "W0" target "y"] edge[ source "W1" target "y"] edge[ source "W2" target "y"] edge[ source "W3" target "y"] edge[ source "W4" target "y"]node[ id "X0" label "X0"] edge[ source "X0" target "y"]]

Note that the data is held in a pandas DataFrame. At present, DoWhy supports only pandas DataFrames as input.
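Because the true effect is known in simulated data, it can serve as a benchmark. The sketch below is not DoWhy's data generator; it is a minimal NumPy simulation, under assumed coefficients and a single common cause `w`, showing why a naive comparison of treated and untreated units overstates the effect when a common cause raises both the treatment probability and the outcome.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 10_000
beta = 10.0  # true causal effect (same value as in the tutorial dataset)

# Hypothetical data-generating process with one observed common cause w.
w = rng.normal(size=n)
treated = rng.random(n) < 1 / (1 + np.exp(-w))      # w raises the chance of treatment
y = beta * treated + 2.0 * w + rng.normal(size=n)   # w also raises the outcome

# Naive estimate: difference in mean outcome, ignoring w entirely.
naive = y[treated].mean() - y[~treated].mean()
print(f"true effect: {beta:.2f}, naive difference in means: {naive:.2f}")
```

The naive difference absorbs the part of the outcome that is driven by `w`, which is why the rest of the tutorial adjusts for the common causes.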

Interface 1 (recommended): Input causal graph

We now input a causal graph in the GML graph format (recommended). You can also use the DOT format.

[5]:

# With graph
model = CausalModel(
    data=df,
    treatment=data["treatment_name"],
    outcome=data["outcome_name"],
    graph=data["gml_graph"]
)

INFO:dowhy.causal_model:Model to find the causal effect of treatment ['v0'] on outcome ['y']

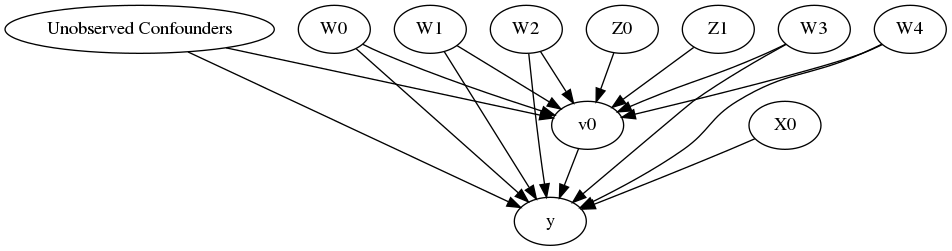

[6]:

model.view_model()

[7]:

from IPython.display import Image, display

display(Image(filename="causal_model.png"))

The above causal graph shows the assumptions encoded in the causal model. We can now use this graph to first identify the causal effect (go from a causal estimand to a probability expression), and then estimate the causal effect.

DoWhy philosophy: Keep identification and estimation separate

Identification can be achieved without access to the data, using only the graph. This results in an expression to be computed. This expression can then be evaluated using the available data in the estimation step. It is important to understand that these are orthogonal steps.
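To make the "graph only" point concrete, here is a simplified sketch in plain Python. It is not DoWhy's identification algorithm: the `ancestors` helper and the set rules below are illustrative assumptions that happen to suffice for the graph printed earlier (with the unobserved confounder abbreviated as `U`). No data is touched at any point.

```python
# Edges of the tutorial's causal graph as (parent, child) pairs.
edges = (
    [("U", "y"), ("v0", "y"), ("U", "v0")]
    + [(f"W{i}", "v0") for i in range(5)]
    + [("Z0", "v0"), ("Z1", "v0")]
    + [(f"W{i}", "y") for i in range(5)]
    + [("X0", "y")]
)

def ancestors(node, edge_list):
    """Return every node with a directed path into `node`."""
    parents = {}
    for src, dst in edge_list:
        parents.setdefault(dst, set()).add(src)
    found, stack = set(), [node]
    while stack:
        for parent in parents.get(stack.pop(), ()):
            if parent not in found:
                found.add(parent)
                stack.append(parent)
    return found

treatment, outcome = "v0", "y"

# Causes of the outcome that do not act through the treatment:
# drop the treatment's outgoing edges before tracing ancestors.
edges_without_treatment = [(s, d) for s, d in edges if s != treatment]
outcome_causes = ancestors(outcome, edges_without_treatment)
treatment_causes = ancestors(treatment, edges)

common_causes = treatment_causes & outcome_causes   # backdoor adjustment candidates
instruments = treatment_causes - outcome_causes     # affect y only through v0

print(sorted(common_causes))
print(sorted(instruments))
```

The two sets match the common causes and instrumental variables that DoWhy reports in its log for this graph.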

Identification

[8]:

identified_estimand = model.identify_effect()

print(identified_estimand)

INFO:dowhy.causal_identifier:Common causes of treatment and outcome:['W0', 'W1', 'W4', 'Unobserved Confounders', 'W2', 'W3']

WARNING:dowhy.causal_identifier:If this is observed data (not from a randomized experiment), there might always be missing confounders. Causal effect cannot be identified perfectly.

WARN: Do you want to continue by ignoring any unobserved confounders? (use proceed_when_unidentifiable=True to disable this prompt) [y/n] y

INFO:dowhy.causal_identifier:Instrumental variables for treatment and outcome:['Z1', 'Z0']

Estimand type: nonparametric-ate

### Estimand : 1

Estimand name: backdoor

Estimand expression:

  d
─────(Expectation(y|W0,W1,W4,W2,W3))
d[v₀]

Estimand assumption 1, Unconfoundedness: If U→{v0} and U→y then P(y|v0,W0,W1,W4,W2,W3,U) = P(y|v0,W0,W1,W4,W2,W3)

### Estimand : 2

Estimand name: iv

Estimand expression:

Expectation(Derivative(y, [Z1, Z0])*Derivative([v0], [Z1, Z0])**(-1))

Estimand assumption 1, As-if-random: If U→→y then ¬(U →→{Z1,Z0})

Estimand assumption 2, Exclusion: If we remove {Z1,Z0}→{v0}, then ¬({Z1,Z0}→y)

If you want to disable the warning for ignoring unobserved confounders, you can add a parameter flag ( proceed_when_unidentifiable ). The same parameter can also be added when instantiating the CausalModel object.

[9]:

identified_estimand = model.identify_effect(proceed_when_unidentifiable=True)

print(identified_estimand)

INFO:dowhy.causal_identifier:Common causes of treatment and outcome:['W0', 'W1', 'W4', 'Unobserved Confounders', 'W2', 'W3']

WARNING:dowhy.causal_identifier:If this is observed data (not from a randomized experiment), there might always be missing confounders. Causal effect cannot be identified perfectly.

INFO:dowhy.causal_identifier:Continuing by ignoring these unobserved confounders because proceed_when_unidentifiable flag is True.

INFO:dowhy.causal_identifier:Instrumental variables for treatment and outcome:['Z1', 'Z0']

Estimand type: nonparametric-ate

### Estimand : 1

Estimand name: backdoor

Estimand expression:

  d
─────(Expectation(y|W0,W1,W4,W2,W3))
d[v₀]

Estimand assumption 1, Unconfoundedness: If U→{v0} and U→y then P(y|v0,W0,W1,W4,W2,W3,U) = P(y|v0,W0,W1,W4,W2,W3)

### Estimand : 2

Estimand name: iv

Estimand expression:

Expectation(Derivative(y, [Z1, Z0])*Derivative([v0], [Z1, Z0])**(-1))

Estimand assumption 1, As-if-random: If U→→y then ¬(U →→{Z1,Z0})

Estimand assumption 2, Exclusion: If we remove {Z1,Z0}→{v0}, then ¬({Z1,Z0}→y)
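The iv estimand above is a ratio: the change in the outcome per unit change in the instrument, divided by the change in the treatment per unit change in the instrument. As a rough illustration, the sketch below computes the corresponding ratio of covariances (the Wald estimator) on simulated data. Everything here is an assumption made for the example: a single binary instrument `z`, a continuous treatment `t` (the tutorial's treatment is binary), and hand-picked coefficients. It does not use DoWhy.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 10_000
beta = 10.0  # true causal effect of t on y

u = rng.normal(size=n)                          # unobserved confounder
z = rng.integers(0, 2, size=n).astype(float)    # instrument: affects y only through t
t = z + u + rng.normal(size=n)                  # treatment, confounded by u
y = beta * t + 3.0 * u + rng.normal(size=n)     # outcome, also confounded by u

def cov(a, b):
    """Sample covariance of two 1-D arrays."""
    return float(np.mean((a - a.mean()) * (b - b.mean())))

iv_estimate = cov(y, z) / cov(t, z)   # Wald / ratio-of-covariances estimate
naive_slope = cov(y, t) / cov(t, t)   # regression slope of y on t, ignoring u

print(f"IV estimate: {iv_estimate:.2f}, naive slope: {naive_slope:.2f}")
```

The naive slope is pulled away from the true effect by the unobserved confounder, while the instrument-based ratio is not, provided the as-if-random and exclusion assumptions hold.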

Estimation

[10]:

causal_estimate = model.estimate_effect(identified_estimand,
        method_name="backdoor.propensity_score_stratification")

print(causal_estimate)

print("Causal Estimate is " + str(causal_estimate.value))

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~v0+W0+W1+W4+W2+W3

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

*** Causal Estimate ***

## Target estimand

Estimand type: nonparametric-ate

### Estimand : 1

Estimand name: backdoor

Estimand expression:

  d
─────(Expectation(y|W0,W1,W4,W2,W3))
d[v₀]

Estimand assumption 1, Unconfoundedness: If U→{v0} and U→y then P(y|v0,W0,W1,W4,W2,W3,U) = P(y|v0,W0,W1,W4,W2,W3)

### Estimand : 2

Estimand name: iv

Estimand expression:

Expectation(Derivative(y, [Z1, Z0])*Derivative([v0], [Z1, Z0])**(-1))

Estimand assumption 1, As-if-random: If U→→y then ¬(U →→{Z1,Z0})

Estimand assumption 2, Exclusion: If we remove {Z1,Z0}→{v0}, then ¬({Z1,Z0}→y)

## Realized estimand

b: y~v0+W0+W1+W4+W2+W3

## Estimate

Value: 9.86863892333762

Causal Estimate is 9.86863892333762

You can pass additional parameters to the estimate_effect method. For instance, to estimate the effect on a subset of the units, specify the “target_units” parameter, which can be a string (“ate”, “att”, or “atc”), a lambda function that filters rows of the data frame, or a new dataframe on which to compute the effect. You can also specify “effect modifiers” to estimate heterogeneous effects across these variables. See help(CausalModel.estimate_effect).
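The difference between “ate”, “att”, and “atc” is easiest to see in a stratification estimator, where only the weights on the strata change. The following is a minimal NumPy sketch, not DoWhy's implementation. The function `stratified_effect`, the simulated data, and the use of the true propensity score are all assumptions for illustration; in practice the propensity score would be estimated from the common causes.

```python
import numpy as np

def stratified_effect(y, treated, propensity, target_units="ate", num_strata=20):
    """Weighted average of within-stratum differences in mean outcome."""
    # Assign each unit to a stratum by propensity-score quantile.
    edges = np.quantile(propensity, np.linspace(0, 1, num_strata + 1))
    strata = np.clip(np.searchsorted(edges, propensity, side="right") - 1,
                     0, num_strata - 1)
    effects, weights = [], []
    for s in range(num_strata):
        in_stratum = strata == s
        n_treated = np.sum(in_stratum & treated)
        n_control = np.sum(in_stratum & ~treated)
        if n_treated == 0 or n_control == 0:
            continue  # no comparison is possible in this stratum
        effects.append(y[in_stratum & treated].mean()
                       - y[in_stratum & ~treated].mean())
        # The target population decides how much each stratum counts.
        weights.append({"ate": n_treated + n_control,
                        "att": n_treated,
                        "atc": n_control}[target_units])
    return float(np.average(effects, weights=weights))

rng = np.random.default_rng(0)
n = 20_000
w = rng.normal(size=n)                     # one common cause
propensity = 1 / (1 + np.exp(-w))          # true propensity (assumed known here)
treated = rng.random(n) < propensity
# The effect grows with w, so treated units (higher w) benefit more on average.
y = (10.0 + 2.0 * w) * treated + 2.0 * w + rng.normal(size=n)

ate = stratified_effect(y, treated, propensity, "ate")
att = stratified_effect(y, treated, propensity, "att")
atc = stratified_effect(y, treated, propensity, "atc")
print(f"ATE: {ate:.2f}  ATT: {att:.2f}  ATC: {atc:.2f}")
```

When the effect varies across units, as in this simulation, the three targets differ; when the effect is constant, they coincide up to sampling noise.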

[11]:

# Causal effect on the control group (ATC)
causal_estimate_atc = model.estimate_effect(identified_estimand,
        method_name="backdoor.propensity_score_stratification",
        target_units="atc")
print(causal_estimate_atc)
print("Causal Estimate is " + str(causal_estimate_atc.value))

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~v0+W0+W1+W4+W2+W3

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

*** Causal Estimate ***

## Target estimand

Estimand type: nonparametric-ate

### Estimand : 1

Estimand name: backdoor

Estimand expression:

  d
─────(Expectation(y|W0,W1,W4,W2,W3))
d[v₀]

Estimand assumption 1, Unconfoundedness: If U→{v0} and U→y then P(y|v0,W0,W1,W4,W2,W3,U) = P(y|v0,W0,W1,W4,W2,W3)

### Estimand : 2

Estimand name: iv

Estimand expression:

Expectation(Derivative(y, [Z1, Z0])*Derivative([v0], [Z1, Z0])**(-1))

Estimand assumption 1, As-if-random: If U→→y then ¬(U →→{Z1,Z0})

Estimand assumption 2, Exclusion: If we remove {Z1,Z0}→{v0}, then ¬({Z1,Z0}→y)

## Realized estimand

b: y~v0+W0+W1+W4+W2+W3

## Estimate

Value: 10.280769761076874

Causal Estimate is 10.280769761076874

Interface 2: Specify common causes and instruments

[12]:

# Without graph
model = CausalModel(
    data=df,
    treatment=data["treatment_name"],
    outcome=data["outcome_name"],
    common_causes=data["common_causes_names"],
    effect_modifiers=data["effect_modifier_names"])

WARNING:dowhy.causal_model:Causal Graph not provided. DoWhy will construct a graph based on data inputs.

INFO:dowhy.causal_graph:If this is observed data (not from a randomized experiment), there might always be missing confounders. Adding a node named "Unobserved Confounders" to reflect this.

INFO:dowhy.causal_model:Model to find the causal effect of treatment ['v0'] on outcome ['y']

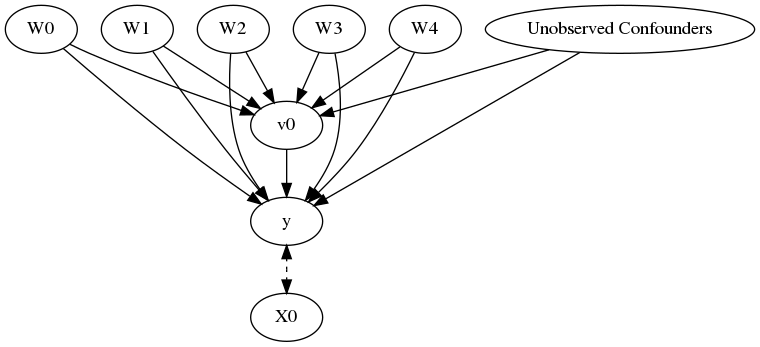

[13]:

model.view_model()

[14]:

from IPython.display import Image, display

display(Image(filename="causal_model.png"))

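We get a causal graph with the same common causes and effect modifier; because no instruments are passed here, it contains no instrumental variables. Identification and estimation are done as before.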

[15]:

identified_estimand = model.identify_effect(proceed_when_unidentifiable=True)

INFO:dowhy.causal_identifier:Common causes of treatment and outcome:['W0', 'W1', 'W4', 'U', 'W2', 'W3']

WARNING:dowhy.causal_identifier:If this is observed data (not from a randomized experiment), there might always be missing confounders. Causal effect cannot be identified perfectly.

INFO:dowhy.causal_identifier:Continuing by ignoring these unobserved confounders because proceed_when_unidentifiable flag is True.

INFO:dowhy.causal_identifier:Instrumental variables for treatment and outcome:[]

Estimation

[16]:

estimate = model.estimate_effect(identified_estimand,
        method_name="backdoor.propensity_score_stratification")

print(estimate)

print("Causal Estimate is " + str(estimate.value))

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~v0+W0+W1+W4+W2+W3

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

*** Causal Estimate ***

## Target estimand

Estimand type: nonparametric-ate

### Estimand : 1

Estimand name: backdoor

Estimand expression:

  d
─────(Expectation(y|W0,W1,W4,W2,W3))
d[v₀]

Estimand assumption 1, Unconfoundedness: If U→{v0} and U→y then P(y|v0,W0,W1,W4,W2,W3,U) = P(y|v0,W0,W1,W4,W2,W3)

### Estimand : 2

Estimand name: iv

No such variable found!

## Realized estimand

b: y~v0+W0+W1+W4+W2+W3

## Estimate

Value: 9.86863892333762

Causal Estimate is 9.86863892333762

Refuting the estimate

Let us now look at ways of refuting the estimate obtained.
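Each refutation re-runs the estimator on perturbed data and checks that the result moves the way a sound estimate should. The sketch below illustrates the logic of three of the checks with NumPy only. It swaps in a plain least-squares regression (the helper `ols_effect`, a hypothetical name) instead of propensity score stratification, and uses simulated data with assumed coefficients, so it shows the idea and not DoWhy's refuters.

```python
import numpy as np

def ols_effect(y, t, covariates):
    """Coefficient on t in a least-squares regression of y on t and covariates."""
    design = np.column_stack([np.ones(len(y)), t, covariates])
    coef, *_ = np.linalg.lstsq(design, y, rcond=None)
    return float(coef[1])

rng = np.random.default_rng(0)
n = 5_000
w = rng.normal(size=n)                                        # observed common cause
t = (rng.random(n) < 1 / (1 + np.exp(-w))).astype(float)      # binary treatment
y = 10.0 * t + 2.0 * w + rng.normal(size=n)                   # true effect is 10

original = ols_effect(y, t, w)

# 1. Random common cause: an irrelevant covariate should barely change the estimate.
w_random = rng.normal(size=n)
with_random = ols_effect(y, t, np.column_stack([w, w_random]))

# 2. Placebo treatment: a permuted treatment has no effect, so expect roughly zero.
placebo = ols_effect(y, rng.permutation(t), w)

# 3. Data subset: a random 90% of the rows should give a similar estimate.
keep = rng.choice(n, size=int(0.9 * n), replace=False)
subset = ols_effect(y[keep], t[keep], w[keep])

print(f"original: {original:.2f}")
print(f"random common cause: {with_random:.2f}")
print(f"placebo treatment: {placebo:.2f}")
print(f"90% subset: {subset:.2f}")
```

An estimate that changes sharply under the first or third check, or that stays far from zero under the placebo check, would be a reason to doubt the original result.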

Adding a random common cause variable

[17]:

res_random=model.refute_estimate(identified_estimand, estimate, method_name="random_common_cause")

print(res_random)

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~v0+W0+W1+W4+W2+W3+w_random

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

Refute: Add a Random Common Cause

Estimated effect:(9.86863892333762,)

New effect:(9.92981702371751,)

Adding an unobserved common cause variable

[18]:

res_unobserved = model.refute_estimate(identified_estimand, estimate,
        method_name="add_unobserved_common_cause",
        confounders_effect_on_treatment="binary_flip",
        confounders_effect_on_outcome="linear",
        effect_strength_on_treatment=0.01,
        effect_strength_on_outcome=0.02)

print(res_unobserved)

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~v0+W0+W1+W4+W2+W3

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

Refute: Add an Unobserved Common Cause

Estimated effect:(9.86863892333762,)

New effect:(8.764065117773479,)

Replacing treatment with a random (placebo) variable

[19]:

res_placebo = model.refute_estimate(identified_estimand, estimate,
        method_name="placebo_treatment_refuter", placebo_type="permute")

print(res_placebo)

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~placebo+W0+W1+W4+W2+W3

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

Refute: Use a Placebo Treatment

Estimated effect:(9.86863892333762,)

New effect:(0.786272901449524,)

Removing a random subset of the data

[20]:

res_subset = model.refute_estimate(identified_estimand, estimate,
        method_name="data_subset_refuter", subset_fraction=0.9)

print(res_subset)

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~v0+W0+W1+W4+W2+W3

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

Refute: Use a subset of data

Estimated effect:(9.86863892333762,)

New effect:(10.302546340220411,)

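As the results above show, the propensity score stratification estimate is reasonably robust to these refutations: adding a random common cause (9.93) or using a 90% subset of the data (10.30) leaves it close to the original 9.87, the placebo treatment drives it toward zero (0.79), and a simulated unobserved confounder shifts it only moderately (8.76). For reproducibility, you can add a “random_seed” parameter to any refutation method, as shown below.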

[21]:

res_subset = model.refute_estimate(identified_estimand, estimate,
        method_name="data_subset_refuter", subset_fraction=0.9, random_seed=1)

print(res_subset)

INFO:dowhy.causal_estimator:INFO: Using Propensity Score Stratification Estimator

INFO:dowhy.causal_estimator:b: y~v0+W0+W1+W4+W2+W3

/home/amshar/python-environments/vpy36/lib/python3.6/site-packages/sklearn/utils/validation.py:744: DataConversionWarning: A column-vector y was passed when a 1d array was expected. Please change the shape of y to (n_samples, ), for example using ravel().

y = column_or_1d(y, warn=True)

Refute: Use a subset of data

Estimated effect:(9.86863892333762,)

New effect:(9.915759961476407,)