Testing Assumptions in model with DoWhy: A simple example

This is a quick introduction to how we can test if our assumed graph is correct and the assumptions match with the dataset. We do so by checking the conditional independences in the graph and see if they hold true for the data as well. Currently we are using partial correlation to test continuous data and conditional mutual information to test discrete data.

First, let us load all required packages.

[1]:

%load_ext autoreload

%autoreload 2

[2]:

import numpy as np

import pandas as pd

import os, sys

sys.path.append(os.path.abspath("../../../"))

import dowhy

from dowhy import CausalModel

import dowhy.datasets

Step 1: Load dataset

The function dataset_from_random_graph(num_vars, num_samples, prob_edge, random_seed, prob_type_data) can be used to create a dataset from a randomly generated directed graph. The data can be mixture of discrete, binary and continuous variables. The parameters have the following significance - - num_vars : Number of variables in the dataset - num_samples: Number of samples in the dataset - prob_edge : Probability of an edge between two random nodes in a graph - random_seed: Seed for

generating random graph - prob_type_of_data : 3-element tuple containing the probability of data being discrete, binary and continuous respectively.(Should add up to 1)

[3]:

data = dowhy.datasets.dataset_from_random_graph(num_vars = 10,

num_samples = 5000,

prob_edge = 0.3,

random_seed = 100,

prob_type_of_data = (0.333, 0.333, 0.334))

df = data["df"] #Insert dataset here

print(data["discrete_columns"], data["continuous_columns"], data["binary_columns"])

print(df.head())

['a', 'c', 'e', 'f', 'h'] ['b', 'd', 'g', 'i', 'j'] ['c', 'e']

a b c d e f g h i j

0 -1 -1.170054 1 0.996507 1 0 -0.218214 -1 -1.107993 0.699933

1 0 0.527130 1 0.894435 1 1 1.485562 0 -1.450727 -1.055649

2 0 0.436049 0 -0.151908 0 0 0.195296 0 2.280391 1.162284

3 0 0.005213 1 0.347139 1 0 -0.727156 -1 -1.140999 -2.165819

4 0 1.212397 0 -1.230150 0 1 0.582913 0 -1.377051 -2.359753

Note that we are using a pandas dataframe to load the data. At present, DoWhy only supports pandas dataframe as input.

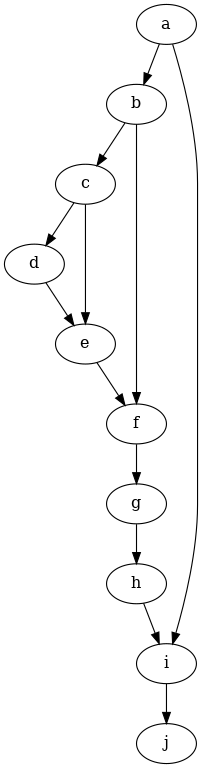

Step 2: Input causal graph

We now input a causal graph. You can do that in the GML graph format (recommended), DOT format or the output from daggity - To create the causal graph for your dataset, you can use a tool like DAGitty that provides a GUI to construct the graph. You can export the graph string that it generates.

[4]:

graph_string = """graph [

directed 1

node [

id 0

label "a"

]

node [

id 1

label "b"

]

node [

id 2

label "c"

]

node [

id 3

label "d"

]

node [

id 4

label "e"

]

node [

id 5

label "f"

]

node [

id 6

label "g"

]

node [

id 7

label "h"

]

node [

id 8

label "i"

]

node [

id 9

label "j"

]

edge [

source 0

target 1

]

edge [

source 0

target 8

]

edge [

source 1

target 2

]

edge [

source 1

target 5

]

edge [

source 2

target 3

]

edge [

source 2

target 4

]

edge [

source 3

target 4

]

edge [

source 4

target 5

]

edge [

source 5

target 6

]

edge [

source 6

target 7

]

edge [

source 7

target 8

]

edge [

source 8

target 9

]

]

"""

Step 3: Create Causal Model

[5]:

model=CausalModel(

data = df,

treatment=data["treatment_name"],

outcome=data["outcome_name"],

graph=graph_string

)

[6]:

model.view_model()

[7]:

from IPython.display import Image, display

display(Image(filename="causal_model.png"))

Step 4: Testing for Conditional Independence

We can check if the assumptions of the graph hold true for the data using model.refute_graph(k, independence_test = {'test_for_continuous': 'partial_correlation', 'test_for_discrete' : 'conditional_mutual_information'}) We are testing X ⫫ Y | Z where X and Y are singular sets and Z can have k number of variables. k value is 1 by default unless input. Currently we are using following settings - - “partial_correlation” for continuous data - “conditional_mutual_information” for discrete data -

“conditional_mutua_information” when Z is discrete and either of X and Y is continuous - “partial_correlation” when Z is continuous/binary and X and Y are either continuous/binary - “conditional_mutual_information” when X and Y are discrete and Z has mixed data - other settings are currently not supported

[8]:

refuter_object = model.refute_graph(k=1, independence_test = {'test_for_continuous': 'partial_correlation', 'test_for_discrete' : 'conditional_mutual_information'}) #Change k parameter to test conditional independence given different number of variables

[9]:

print(refuter_object)

Method name for discrete data:conditional_mutual_information

Method name for continuous data:partial_correlation

Number of conditional independencies entailed by model:34

Number of independences satisfied by data:25

Test passed:True

Testing for a set of edges

We can also test a set of conditional independences whether they are true or not The input has to be in the form - [( x1, y1, (z1, z2)), ( x2, y2, (z3, z4)), ( x3, y3, (z5,)), ( x4, y4, ()) ] ##### The testing data can be a mix of discrete and continuous types as well (Here binary implies discrete only) -

[10]:

refuter_object = model.refute_graph(independence_constraints = [('c', 'e' , ('g',)), # c and e - binary, g - continuous

('f', 'h' , ('b',)), # f and h - discrete, b - continuous

('e', 'g' , ('h',)), # e - binary, g - continuous, h - discrete

('c', 'a' , ('b',)), # c and a - discrete, b - continuous

('d', 'i' , ('c',)), # d and i - continuous, c - binary

('a', 'j' , ()) # a - discrete, j - continuous

],

independence_test = {'test_for_continuous': 'partial_correlation', 'test_for_discrete' : 'conditional_mutual_information'}

)

[11]:

print(refuter_object)

Method name for discrete data:conditional_mutual_information

Method name for continuous data:partial_correlation

Number of conditional independencies entailed by model:6

Number of independences satisfied by data:6

Test passed:True

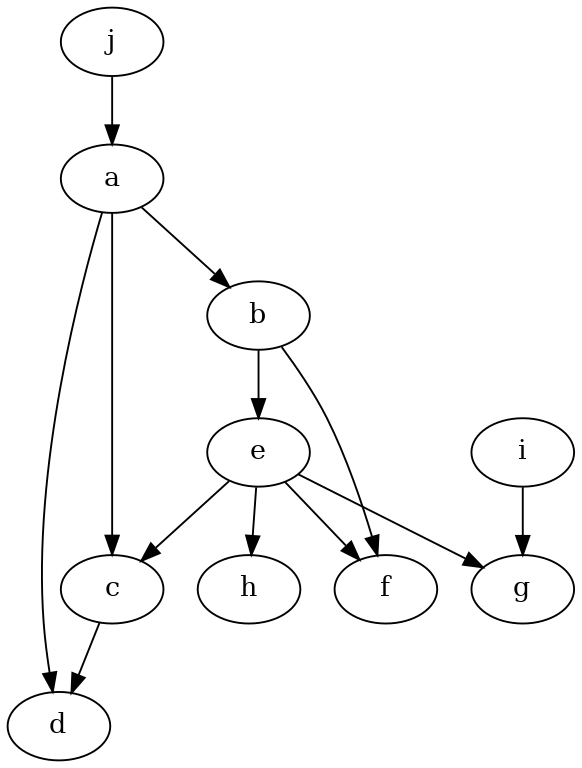

Testing with a wrong graph input

[12]:

graph_string = """graph [

directed 1

node [

id 0

label "a"

]

node [

id 1

label "b"

]

node [

id 2

label "c"

]

node [

id 3

label "d"

]

node [

id 4

label "e"

]

node [

id 5

label "f"

]

node [

id 6

label "g"

]

node [

id 7

label "h"

]

node [

id 8

label "i"

]

node [

id 9

label "j"

]

edge [

source 0

target 1

]

edge [

source 0

target 2

]

edge [

source 0

target 3

]

edge [

source 1

target 4

]

edge [

source 1

target 5

]

edge [

source 2

target 3

]

edge [

source 4

target 2

]

edge [

source 4

target 5

]

edge [

source 4

target 6

]

edge [

source 4

target 7

]

edge [

source 8

target 6

]

edge

[

source 9

target 0

]

]"""

[13]:

model = CausalModel(

data=df,

treatment=data["treatment_name"],

outcome=data["outcome_name"],

graph=graph_string,

)

[14]:

model.view_model()

[15]:

from IPython.display import Image, display

display(Image(filename="causal_model.png"))

[16]:

refuter_object = model.refute_graph(k=2)

We can see that since we input the wrong graph, many conditional independences were not met

[17]:

print(refuter_object)

Method name for discrete data:conditional_mutual_information

Method name for continuous data:partial_correlation

Number of conditional independencies entailed by model:359

Number of independences satisfied by data:144

Test passed:False

[ ]: